On Computers

The First General-purpose Electronic Computer

In 1937, Claude Elwood Shannon, then a graduate student at the Massachusetts Institute of Technology, wrote a Master’s thesis demonstrating that the electrical application of Boolean algebra could represent and solve any numerical or logical relationship. The use of electrical switches to do logic is the basic concept that underlies all electronic digital computers.

ENIAC, short for Electronic Numerical Integrator And Computer, was the first general-purpose electronic computer. It derived its speed advantage over previous electromechanical computers from using digital electronics with no moving parts. Designed and built to calculate artillery firing tables for the U.S. Army’s Ballistic Research Laboratory, ENIAC was capable of being reprogrammed to solve a full range of computing problems.

Digital computers incorporate electrical circuits that perform Boolean logic. Boolean logic works by operating with TRUE and FALSE, or 1’s and 0’s. The basic data unit, which can have a value of 1 or 0, is called a bit. A wire that carries a certain voltage can represent a Boolean 1, and one that does not can represent a Boolean 0. Digital computers can perform very complex tasks by replicating and combining a small number of basic electrical circuits that perform corresponding Boolean functions. The most basic Boolean functions are NOT, OR, and AND. The NOT (inverter) circuit takes one bit as input and provides its opposite value as output. The OR circuit takes two bits, A and B, as inputs and provides an output C that is 1 if either A or B is 1 (or both A and B are 1), and is 0 if both A and B are 0. The AND circuit sets output C to 1 if inputs A and B are both 1, otherwise C is set to 0.
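
The three basic functions described above can be sketched in a few lines of Python. This is only an illustration, not how any particular machine implemented them: the function names are hypothetical, and the half adder is an assumed example (not taken from the text) of how a useful circuit might be built by combining the basic gates.

```python
def not_gate(a: int) -> int:
    """Inverter: output is the opposite of the single input bit."""
    return 1 - a


def or_gate(a: int, b: int) -> int:
    """Output is 1 if either input (or both) is 1, otherwise 0."""
    return a | b


def and_gate(a: int, b: int) -> int:
    """Output is 1 only if both inputs are 1, otherwise 0."""
    return a & b


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Assumed example: add two bits using only NOT, OR, and AND.

    Returns (sum_bit, carry_bit).
    """
    carry = and_gate(a, b)
    # The sum bit is 1 when exactly one input is 1:
    # (A OR B) AND NOT (A AND B)
    sum_bit = and_gate(or_gate(a, b), not_gate(carry))
    return sum_bit, carry


def truth_table(gate):
    """List (A, B, C) rows for a two-input gate."""
    return [(a, b, gate(a, b)) for a in (0, 1) for b in (0, 1)]


for name, gate in (("OR", or_gate), ("AND", and_gate)):
    print(name, truth_table(gate))
```

Calling `half_adder(1, 1)` yields a sum bit of 0 and a carry bit of 1, which is the binary result 10 (decimal 2).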

The Army contract for ENIAC was signed on June 5, 1943 with the University of Pennsylvania’s Moore School of Electrical Engineering. Developed by a team led by John Presper Eckert and John W. Mauchly, ENIAC was unveiled on February 14, 1946 at the University of Pennsylvania, having cost almost $500,000. ENIAC was shut down late in 1946 for a refurbishment and a memory upgrade, and in 1947 it was transferred to Aberdeen Proving Ground, Maryland. On July 29 of that year, it was turned on and remained in operation until October 2, 1955.

In its original form, ENIAC did not have a central memory. Its programming was determined by the settings of switches and cable connections. Improvements to ENIAC’s design were introduced over time. In 1953, a 100-word magnetic core memory was added.

ENIAC’s physical size was massive. It contained 17,468 vacuum tubes and 70,000 resistors, and weighed 30 tons. ENIAC was roughly 8-1/2 feet by 3 feet by 80 feet in size, covered an area of 680 square feet, and consumed 160 kilowatts of power. An IBM card reader was used for input, and an IBM card punch machine was used for output. The punched cards could be used to produce printed output offline using an IBM tabulating machine.

Bits, Bytes and Words

A bit, short for binary digit, is the smallest unit of information on a computer or data storage device. The term “bit” was first used in this fashion in print in 1948, in an article by Claude Shannon. A bit can hold only one of two values: 0 or 1.

Larger units of information can be represented by combining consecutive bits. A byte, composed of 8 consecutive bits, can represent binary numbers in the range 0 (00000000) to 255 (11111111). In many early computers, 8 bits (a byte) formed the natural unit of data used in computations. The term octet is also used to refer to an 8-bit quantity.

When advances in technology increased computer calculation and memory capabilities, it became practical to develop computer architectures using larger units of data. The term “word” was used to describe the natural unit of data used in a particular computer architecture. Computer architectures have mostly used multiples of 8 bits, although some designs were developed around units of data such as 9, 10, 12, or 36 bits. Currently, most computers use 32-bit words or 64-bit words in their architectures.

A 32-bit word can represent unsigned decimal integers in the range 0 to 4,294,967,295, or signed decimal integers in the range -2,147,483,648 to +2,147,483,647 (using two’s complement arithmetic). The term megabit (Mbit or Mb) generally refers to the quantity one million (1,000,000) bits, and the term megabyte (Mbyte or MB) generally refers to the quantity one million bytes. However, the term megabit is sometimes used to refer to the quantity 2^10 x 2^10 or 1024 x 1024 (1,048,576) bits. Similarly, megabyte is sometimes used to refer to the quantity 1,048,576 bytes.
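
The ranges quoted above follow directly from the number of bits in the unit of data. The short Python sketch below recomputes them; the helper names are hypothetical and chosen only for this illustration.

```python
def unsigned_range(bits: int) -> tuple[int, int]:
    """Smallest and largest unsigned integer that fit in `bits` bits."""
    return 0, 2**bits - 1


def signed_range(bits: int) -> tuple[int, int]:
    """Smallest and largest two's complement integer in `bits` bits."""
    return -(2 ** (bits - 1)), 2 ** (bits - 1) - 1


# The two conventions for "mega" described in the text.
MEGA_DECIMAL = 10**6          # 1,000,000
MEGA_BINARY = 2**10 * 2**10   # 1024 x 1024

print("byte:", unsigned_range(8), format(255, "08b"))
print("32-bit unsigned:", unsigned_range(32))
print("32-bit signed:", signed_range(32))
print("mega (decimal, binary):", MEGA_DECIMAL, MEGA_BINARY)
```

The same helpers apply to any word size, for example `unsigned_range(64)` for a 64-bit word.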

Vacuum Tube Memory

Vacuum tubes were used to provide temporary data storage (memory) in early digital computers. Pins on the tube’s base were connected via wires to internal elements arranged in grids on cathode, collector, insulator, and read-write sheets. Multiple miniature electrical circuits formed logical gates that held bits of data (1 or 0). A 16x16 arrangement could store and read back 256 bits of data. Some tubes with multiple stacked sheets could store 4096 bits (4 kilobits) of data. The data in memory was erased when the tube was powered off.

Punched Cards

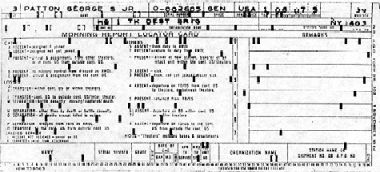

Early digital computers used punched cards for data transfer and storage. The punched card technology dates back to Herman Hollerith’s patent of 1884. Each card had 80 columns arranged in 12 rows. The punches in each column coded for an alphanumeric character. The rightmost 8 columns were sometimes reserved to assign card sequence numbers, and if so up to 72 data characters could be coded for in one card. The characters could be printed on the cards, so humans as well as computers could read them. The cards were made of heavy, stiff paper, and were 3-1/4 inch x 7-3/8 inch in size.
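
The column layout described above (72 data columns followed by 8 sequence-number columns) can be modeled as a fixed-width 80-character record. The Python sketch below is only an illustration: the function names are hypothetical, and writing the sequence number as a zero-padded decimal is an assumption, since the text does not say how sequence numbers were formatted.

```python
CARD_COLUMNS = 80
SEQUENCE_COLUMNS = 8
DATA_COLUMNS = CARD_COLUMNS - SEQUENCE_COLUMNS  # 72


def make_card(data: str, sequence: int) -> str:
    """Build an 80-column card image: data field, then sequence field."""
    if len(data) > DATA_COLUMNS:
        raise ValueError(f"data exceeds {DATA_COLUMNS} columns")
    seq_field = str(sequence).zfill(SEQUENCE_COLUMNS)  # assumed format
    if sequence < 0 or len(seq_field) > SEQUENCE_COLUMNS:
        raise ValueError("sequence number does not fit in 8 columns")
    return data.ljust(DATA_COLUMNS) + seq_field


def split_card(card: str) -> tuple[str, int]:
    """Recover (data, sequence) from an 80-column card image."""
    if len(card) != CARD_COLUMNS:
        raise ValueError(f"a card image must have {CARD_COLUMNS} columns")
    return card[:DATA_COLUMNS].rstrip(), int(card[DATA_COLUMNS:])


card = make_card("HELLO", 10)
print(repr(card))
print(split_card(card))
```

Sequence numbers of this kind would let a deck be put back in order by sorting on the last 8 columns.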

The First Transistors

The first transistors were developed at AT&T’s Bell Laboratories by Walter Brattain, John Bardeen, and Robert Gibney in 1947. The initial semiconductor devices, point contact electronic amplifiers, were made of germanium, plastic and gold.

In 1951, Bell Laboratories developed a junction transistor made of sandwiched semiconductor layers using germanium, gallium and antimony in its construction. This new type of transistor, following a concept envisioned by William Shockley, was a more efficient amplifier than the point contact type.

Under licenses granted by AT&T, hundreds of companies soon began to manufacture durable and energy-efficient transistors that began to replace fragile vacuum tubes in telephone equipment, computers, and radios. In 1954, Gordon Teal, then working at Texas Instruments, developed the first practical transistor that used silicon instead of germanium. The silicon transistors that Texas Instruments produced had an advantage over the earlier germanium transistors, in that they were less likely to fail at high temperatures.

Solid State (Core) Memory

In the early 1950’s, An Wang, working at Harvard University, and Jay Forrester, at MIT, contributed to the development of a practical magnetic core computer memory. With no moving parts, it was highly reliable. This form of solid state memory was used to provide main memory storage for a computer’s central processing unit. Small ferrite rings (cores) were held together about 1 mm apart in a grid layout, with wires woven through the middle of the rings. Each ring stored one bit (a magnetic polarity). By applying currents to wires in particular directions, magnetic fields were induced that caused a core’s magnetic field to point in one direction or another. Once set, the magnetic polarity remained after electric current was removed. Current applied to selected wires was used to set, read and reset specific cores in the grid.

Early Computers

In 1946, Engineering Research Associates (ERA) was developing purpose-built code-breaking machines for the U.S. Navy in St. Paul, Minnesota. Their activities led ERA to develop expertise in magnetic drum and paper tape data storage devices. The Navy awarded ERA a contract to develop a programmable code-breaking machine in 1947. The resulting device, the ERA Atlas, completed in 1950, was the first stored-program electronic computer. ERA marketed it as the ERA 1101.

The principal developers of the ENIAC formed the Eckert-Mauchly Computer Corporation (EMCC), a private venture for the development and marketing of computers. Remington Rand, a major manufacturer of typewriters, bought EMCC in 1950. The Bureau of the Census contracted with EMCC to develop a computer to facilitate census tabulations, and this work led to the Universal Automatic Computer (UNIVAC) machine, which became available in 1951. The UNIVAC I was a stored-program computer. Its metallic tape drives could store 10 megabits of data in each removable reel. Using mercury delay line memory, the UNIVAC I, which was water-cooled, had 5,200 tubes and 18,000 germanium diodes. The entire system weighed nearly 15 tons and consumed 125 kilowatts of power.

In 1952, Remington Rand acquired ERA, and in 1953 the former ERA and EMCC entities were merged to form the UNIVAC division of Remington Rand. The UNIVAC II mainframe computer, first marketed in 1958, had a magnetic core memory of up to 10,000 12-character words, magnetic tape data storage drives, and processing supported by improved vacuum tube technology (5,200 tubes) and early transistorized circuits (1,200 transistors). The UNIVAC II could execute 580 multiply or 6,200 add operations per second.

During the Korean War, International Business Machines (IBM) developed the Defense Calculator computer for the U.S. Government. This work led to the introduction of the IBM 701 in 1952. This computer had addressable electronic memory and used magnetic tapes and a high speed card reader.

Working for the IBM Applied Science Division, J.W. Backus, H. Herrick, and I. Ziller developed a specification for the Mathematical Formula Translating System (FORTRAN) in 1954. FORTRAN was designed so that it could be directly used by engineers and scientists, rather than require specialized skills in machine-language programming. FORTRAN was the first rigorously defined scientific programming language. It was used in the IBM 704 computer introduced in 1954. The IBM 704 had core memory, hardware support for floating point arithmetic, and could execute up to 40,000 instructions per second.

Operation of Early Computers

Initially, computer users relied on computer operators and support personnel to communicate with the computers. Computer users would typically prepare inputs by writing on printed forms. The forms were taken to punch card machine operators, who used them to generate computer input cards. The input cards were assembled sequentially in card trays and delivered to the computer room, where computer operators would add any necessary computer instructions and use a card reader to feed the machine-readable cards to the computer, causing a specific computer program to be executed.

Computer jobs would execute one at a time. Execution of jobs using the same basic computer setup would take place as a batch, in a sequence determined by the operator. The operator would monitor job execution and could interrupt or re-execute jobs as necessary. Job output would be in the form of computer-generated punched cards, which could then be input to a printer to obtain printed output. A courier would return the submitted inputs and deliver the printed job output to the user.

Computers and their peripheral equipment, such as card readers and tape drives, consumed significant amounts of electrical power and often required special air-conditioning equipment.

The DATAR Project

In 1949, the Canadian Navy and its contractor Ferranti Canada initiated work on Digital Automated Tracking and Resolving (DATAR), a computerized battlefield information system. DATAR was to provide commanders with a current picture of tactical and strategic situations, to provide weapons control systems with necessary information to assist in target designation, and to direct ships and aircraft. DATAR’s computer used 30,000 vacuum tubes and a magnetic drum memory. Prototype testing was successful, but pointed out the relatively low mean time between failures of vacuum tubes. The project was cancelled in 1954 due to escalations in the estimated cost of deployed systems, but it produced a technology base that inspired later work along similar lines by Canadian, British and United States military organizations.

One result of the Canadian work on DATAR was a device for indicating position, called a trackball. It was invented in 1952 by Tom Cranston, Kenyon Taylor and Fred Longstaff. The trackball allowed the control of a cursor by manipulating a ball housed in a socket containing sensors. Because DATAR was a secret project, the initial trackball device was not patented.

Magnetic Tape Data Storage

Magnetic tape began to be used to store data in the 1950’s. A powerful electromagnet was used to write data on a layer of ferromagnetic material on an acetate or polyester film. Once written, the data record remained readable for a long time (in excess of ten years). The data could be read, erased, or rewritten electromagnetically, so that a tape could be reused many times. A 1,200 foot roll of 7-track 1/2 inch magnetic tape could store 5 megabytes of data, which was orders of magnitude more than could be practically stored on paper tape or punched cards. Magnetic tape was widely adopted for mainframe computer data storage. It was economical and remained a leading form of mainframe computer data storage until the late 1980’s. By then, 2,400 foot 9-track tapes could store 140 megabytes of data.

Magnetic Disk Data Storage

IBM developed magnetic disk data storage in the mid-1950’s. Data was written and read electromagnetically from material on the disk surface. The disk was spun at high speed and the read-write heads moved on an arm to appropriate locations on tracks on the disk. This technology allowed rapid access to any location on any track on the disk, which resulted in much faster data access times than tapes, which required winding of the tape for access to specific sequentially-stored data. Disks were assembled in packs with initial storage capacities of 5 megabytes of data.

Hardware, Software and Firmware

The first computers were all hardware. That is, they comprised only electronic and electromechanical elements, like vacuum tubes, voltage regulators, wiring, and switches. Each time a computer was “run” the operator had to preset the instructions to be followed, usually via switch settings. When the computer was turned off, the instructions, its program, vanished. The initial version of ENIAC operated in this way.

The development of reliable data storage devices and of stored-program computers led to a new class of computer attribute: the set of stored computer instructions. This set of instructions originally mimicked the machine instructions that were formerly conveyed by operator-set switch positions. The computer instructions were no longer ephemeral; they were preserved in some form of permanent storage, originally punched cards or paper tapes.

Early stored programs were written in machine language which was particular to the specific type of computer. Computer languages were developed to facilitate computer usage and enhance portability of programs between different computer types. In 1958, John Wilder Tukey, a statistician, used the term “software” to refer to the intangible elements of a computer in an article published in American Mathematical Monthly. Software is now used to refer to computer programs in general, both applications, such as MS Word or MS Paint, and operating system software, such as Unix or MS Windows.

In 1967, Ascher Opler published an article, Fourth-Generation Software, in Datamation magazine in which he used the term “firmware” to refer to the microcode used to define a computer’s fixed instruction set. Firmware is a term now used to refer to software stored in non-volatile computer memory. Firmware consists of programs and data that are either permanently stored in memory that cannot be altered, or stored in a form of semi-permanent memory that can only be changed by using a special process.

The First Integrated Circuits

In 1959, Jack Kilby, at Texas Instruments, and Robert Noyce, at Fairchild Semiconductor, independently developed ways of building entire circuits, incorporating transistors, resistors, capacitors and internal connectors, in a single silicon chip. The miniature integrated circuits were made by building up etched layers of silicon semiconductor crystals.

Integrated circuit chips could be cheaply mass produced, and consumed much less power than equivalent circuits made by wiring together larger discrete transistors, resistors, and capacitors on a board. The availability of integrated circuits led to great reductions in the size of general-purpose computers and greatly increased their capabilities. Embedded purpose-built chips were used to provide automated control and added functionality in a variety of electromechanical devices.

Embedded Computers

In 1955, North American Aviation’s Autonetics division developed a solid-state guidance computer for the Navajo cruise missile. Autonetics went on to work on stellar and inertial navigation systems for US Navy ships and Air Force aircraft and missiles. In 1961, Autonetics developed an embedded computer to perform automatic guidance functions for the Boeing Minuteman intercontinental ballistic missile. Originally, the Minuteman’s Autonetics D-17 computer used transistors for its processor and a hard disk for main memory. Autonetics later provided an embedded microcomputer for the Minuteman II missile. The miniaturization and reduction in cost of computer components made it possible in later applications to embed integrated processors in industrial machines and in mass-produced consumer products.

In current applications, embedded computers are “computers on a chip” used in apparatus such as radar detectors, cameras, television remote controllers, unmanned aerial vehicles, cell phones, robots, and engine controllers. They are used to perform pre-programmed control or regulation tasks, often in devices that are expected to operate continuously for years. Modern embedded computers can be broken into two broad classes: pre-programmed microprocessors and microcontrollers. Microcontrollers incorporate peripheral functions on the chip.

Unlike general-purpose computers, which are adaptable to a wide range of uses, embedded computers are dedicated to a specific mechanism. The interfaces of embedded computers to the environment or user are predefined; they are otherwise self-contained. Although specific embedded computer hardware may be applied to multiple devices, the programming and data, typically firmware, are tailored to a specific function and mechanism.

Development of Computer Terminals

Electromechanical machines, such as those produced by the Teletype Corporation, first superseded the use of punched cards for computer input and output. By the mid-1960’s, Teletype machines were being used to interface electronically with computers and produce printed output. The terminals had data transmission rates of about 10 characters per second and had keyboards that could be used to type inputs for the computer. Devices of this type permitted remote control and communication with computers.

Teleprinters were the first interactive computer terminals. Computer output was typed on paper. A special printer character would prompt the user to type a text command. A machine-readable paper tape was used to record computer output and to prepare lengthy computer inputs offline.

Paper tape was a more compact form of permanent data storage than punched cards. Punched paper tape was used for data storage as far back as 1846, when Alexander Bain devised its use for the printing telegraph. The data tape had an endless row of small holes along its length for spooling. Alphanumeric characters were coded in columns of punches arranged perpendicular to the tape’s lengthwise axis.

In the late 1970’s, advances in integrated circuit technology and in communications led to the development of computer terminals incorporating cathode ray tube (CRT) displays. Manufacturers like DEC and IBM produced terminals that had keyboards similar to the earlier teleprinters and incorporated the circuitry necessary to drive text CRT displays and to communicate interactively with remote mainframe computers via special connections and protocols. In the early 1980’s, advances in magnetic disk and magnetic tape data storage made it possible to store computer inputs and outputs in centralized data storage facilities and resulted in the phasing out of punched cards and paper tape for data storage.

The word modem is a contraction of modulator-demodulator, a device for transmitting digital data over a communication network. The first modems supported communications between computers and devices such as computer terminals over telephone lines. In the 1970’s, acoustic coupler modems transmitted data at 300 bits per second (bps). Later high speed modems increased the rate of transmission by several orders of magnitude.
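
To give a feel for these rates, the Python sketch below estimates how long a transfer would take at a given bit rate. It is a rough illustration under stated assumptions: it counts 8 bits per byte and ignores start and stop bits, error correction, and any other protocol overhead. The 56,000 bps figure is an assumed example of a later modem rate, not a number taken from the text, and the function name is hypothetical.

```python
BITS_PER_BYTE = 8  # assumption: no start/stop bits or protocol overhead


def transfer_seconds(num_bytes: int, bits_per_second: float) -> float:
    """Idealized time, in seconds, to send num_bytes at the given rate."""
    if bits_per_second <= 0:
        raise ValueError("bits_per_second must be positive")
    return num_bytes * BITS_PER_BYTE / bits_per_second


payload = 1_000_000  # one million bytes
for rate in (300, 56_000):  # 56,000 bps is an assumed later rate
    seconds = transfer_seconds(payload, rate)
    print(f"{rate:>6} bps: {seconds:10.1f} s ({seconds / 3600:.2f} h)")
```

Under these assumptions, sending one million bytes at 300 bps would take more than seven hours.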

Development of a Graphical User Interface

Stanford Research Institute (SRI), working for the Defense Department’s Advanced Research Projects Agency (ARPA) on shared computer information, developed a user interface that incorporated graphics. A CRT screen displayed information, and an electromechanical device was used to navigate with simple hand motions through graphical information shown on the computer screen. In 1967, Douglas Engelbart demonstrated an X-Y position indicator which tracked motion through two wheels that contacted the surface on which it rested, and an idler ball bearing. The original hand-held device had a cord coming out of the back, like a tail, and became known as a computer mouse. The mouse served a function similar to the earlier trackball, but was generally found easier to use.

In 1972, William English, who had earlier worked in Engelbart’s team at SRI but had moved on to Xerox, developed an improved computer mouse that used a rolling ball instead of the two wheels of the original mouse. The first general use of the mouse as a pointing device was in the Xerox Alto, an early personal-use computer utilized within the Xerox Palo Alto Research Center (PARC) organization and at several American universities beginning in 1973.

The Alto processor was the size of a small file cabinet and had 128 (expandable to 512) kilobytes of main memory, a hard disk with a removable 2.5 megabyte cartridge, and an Ethernet connection. A keyboard, mouse, and CRT display provided the user interface. Rather than using dedicated hardware for most input/output functions, as was then common, the Alto utilized microcode in its central processor unit. Software programs for the Alto computer were written in the Basic Combined Programming Language (BCPL) and Mesa programming languages. The Alto provided an integrated graphical user interface in a format similar to later workstations. Xerox managers did not develop the Alto as a commercial product, possibly because of its high cost, about $32,000.

Microprocessors

The basic functional characteristics of a computer’s central processing unit are the abilities to perform mathematical and logical operations, and to read and write data to memory. Since integrated circuits could be built to contain entire circuits, including transistors and other components, it was reasonable to attempt to build a computer’s central processing unit in a single silicon chip.

The Intel Corporation, founded by Robert Noyce and Gordon Moore, succeeded in 1971 in building a complete computation engine, a microprocessor, on a single chip. This initial device, the Intel 4004, had limited capabilities (it could only add and subtract), but it was very small (1/8 x 1/16 inch), incorporated 2,300 transistors, and consumed little power. It was used by Busicom, a Japanese company, in one of the first portable electronic calculators.

Intel’s 4004 was a 4-bit computer, that is, it operated on 4 bits (the 1’s and 0’s of binary numbers) at a time. By 1974, Intel’s continued development of the microprocessor led to the introduction of the Intel 8080, an 8-bit computer on one chip incorporating 6,000 transistors. The availability of relatively powerful microprocessors (the Intel 8080 could execute 640,000 instructions per second) led to rapid advances in computers and other electronic devices.

Microcomputers

The military and large corporations like AT&T and IBM funded research and development in computer technologies. Academics and small firms sometimes became participants in groundbreaking projects and learned or helped develop the new technologies.

The commercial availability of microprocessors facilitated the development of small home-built computers. In the early 1970’s various firms began to market drawings and instructions for building small computers, and hobbyists purchased microprocessors and other parts and built their own microcomputers at home. The earliest units provided only lights and switches for operator interface, and had to be programmed in machine language, but soon compatible monitors, keyboards and tape and floppy disk drives were developed. Even the most primitive tabletop microcomputers were faster and more reliable than early computers like the ENIAC.

The BASIC (Beginner’s All-purpose Symbolic Instruction Code) computer language, originally developed in the 1960’s by John George Kemeny and Thomas Eugene Kurtz at Dartmouth College in Hanover, New Hampshire, allowed operators without a deep technical and mathematical background to write their own computer programs. Originally a compiled language, BASIC was developed into an interpreted language, that is, it was made interactive, provided friendly error messages, and responded quickly for small programs.

A number of start-up companies in the United States began to manufacture microcomputers for small business and hobbyist use. These desktop machines supported services such as accounting, word processing and data management for enterprises that could not afford a mainframe computer and its operating staff. The availability of interactive BASIC facilitated the development of custom applications.

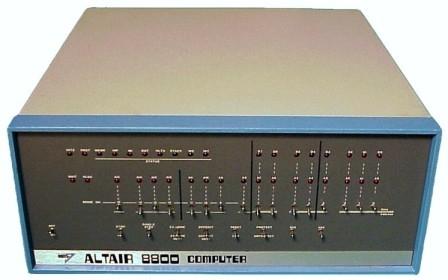

The MITS Altair 8800 microcomputer, with an Intel 8080 8-bit microprocessor, became available in 1975 in basic kit form for $439. In its initial basic form, the computer’s only operator interface was through lights and switches. Soon additional memory and other components were made available by MITS, which would also sell assembled components.

Microcomputers like the Altair 8800 required a certain degree of technical ability from the user, who was expected to buy and install additional components as required. When the microcomputer was powered up, an initiating program in hardware memory would read the operating system from a reserved area at the beginning of a floppy disk. The Control Program for Microcomputers (CP/M) operating system, developed by Gary Kildall of Digital Research for the Intel 8080 microprocessor, was used in the Altair 8800 and other microcomputers of that era. CP/M provided a command line interface to the operator. When Altair BASIC, developed by William Gates and Paul Allen, became available, it greatly expanded the functionality and consumer base of the microcomputer.

Magnetic Tape Cassettes

The Compact Cassette was developed by Philips in the 1960’s. It was widely used for music recording and playback in the 1960’s and 1970’s. The cassette housed a thin roll of tape with a capacity to store around 1 million bytes of data. Their small size made Compact Cassettes easy to handle and move about. They were used by personal computers like the Commodore 64 for data storage and transfer.

IBM PC

In 1980, after observing the growing microcomputer market for some time, IBM decided to assign a small group of engineers to work on the development of a new machine. The work was carried out secretly in Boca Raton, Florida, far from IBM’s New York headquarters. The budget allocated to this project was minimal, since top IBM executives remained focused on mainframe computers and peripheral equipment marketing and were opposed to the new venture.

Lack of funds for research and development forced the IBM developers to build the new microcomputer using available components. One component selected was the 4.77 MHz Intel 8088 microprocessor, which was already being used in an IBM typewriter. IBM contacted William Gates, head of a startup company named Microsoft, to talk about home computers and the use of Microsoft BASIC in the evolving IBM microcomputer. Microsoft became essentially a consultant to IBM on the microcomputer project.

In July of 1980, IBM representatives met with Gates to talk about an operating system for the evolving IBM microcomputer. IBM had earlier negotiated for the operating system CP/M, but had been unable to close a deal with Digital Research. Microsoft purchased the operating system QDOS from Seattle Computer Products and hired Tim Paterson, the principal author of QDOS, to develop the Microsoft Disk Operating System (DOS). Gates agreed to license Microsoft DOS to IBM at a low price, with the proviso that it be provided as original equipment in all the new IBM microcomputers. Microsoft retained the rights to market Microsoft DOS separately from IBM.

The first IBM PC ran on the Intel 8088 microprocessor. The PC came equipped with 16 kilobytes of memory, a drive for a removable 160k floppy disk, a monochrome display, a small speaker, the Microsoft DOS operating system, and Microsoft BASIC. A color display, a small printer, additional memory (to 256k), a second floppy disk drive, the CP/M operating system, and some software programs, like games and the spreadsheet VisiCalc, were available from IBM. The price of the basic PC model was $1,565, and options could push the price well over $2,000.

These new machines were not significantly superior to other contemporary microcomputer designs, but they carried the IBM imprimatur, were well integrated, had well-crafted user manuals, and were offered through standard marketing channels, such as Sears. They were immensely popular. Microsoft’s contracting to provide DOS as original equipment in all IBM PC’s turned out to be a brilliant move, as it meant that CP/M and other operating systems could only be bought at extra cost, whereas DOS was “free.” Microsoft’s DOS eventually became the dominant microcomputer operating system.

IBM later introduced improved models of the PC, like the XT, with hard disk drives, modems for network access, and broader software options, including the very popular spreadsheet Lotus 1-2-3. IBM also offered a plug-in portable PC version. The IBM PC’s open architecture and its use of standard components made it possible for many independent software and hardware manufacturers to develop add-on or compatible products. Spreadsheet, word-processing and game programs, displays, mice, modems, and other hardware and software products were made available by start-up companies riding on the PC’s coattails.

Commodore 64

Commodore International first released the Commodore 64 in 1982. This machine was a successor to the earlier Commodore VIC-20 and Commodore MAX. The Commodore 64 sold for $595 and incorporated a MOS Technology 6510 processor. It came with 64k of memory and BASIC, supported sound and 16-color graphics, and was compatible with any television set.

The Commodore 64 was a best seller. It was sold through authorized dealers and through retailers such as department stores and toy stores. A great number of software programs were developed for the Commodore 64, including business applications, games, and development utilities. Before it was discontinued in 1995, 30 million Commodore 64’s were sold.

Compaq Portable Computer

In 1982, soon after the IBM PC was introduced, Rod Canion, Jim Harris and Bill Murto left Texas Instruments and formed the Compaq Computer Corporation. The first Compaq product was sketched on a paper place mat in a pie shop in Houston, Texas. It was a portable close copy of the IBM PC, with many off-the-shelf components identical to those in the IBM machine. Compaq programmers reverse-engineered the proprietary IBM PC Basic Input/Output System (BIOS), the hard-coded program used to identify and initiate component hardware so that software can load and execute on the machine. The Compaq BIOS allowed Compaq machines to be compatible with components designed for the IBM PC.

The Compaq Portable weighed 28 pounds and sold for $3,590, with two 320k floppy disk drives, a 9-inch monochrome display, Microsoft DOS, and the ability to run all of the IBM PC software. The machine was self-contained, but had to be plugged in to a power outlet to operate, as it had no batteries. The fledgling company sold 53,000 Compaq Portables in 1983, its first year of operation.

Floppy Disks

The first read-write floppy disks were introduced in the early 1970’s. The flexible disk was housed in an envelope, 8 inches square, made of thick paper. It could be inserted in a slot in a fixed drive, which spun the disk while read-write heads wrote magnetically on its surface. The 8-inch disks had a capacity of 256 kilobytes. A smaller 5-1/4 inch single-sided floppy disk format was available by 1980, with an initial capacity of 160 kilobytes. A double-sided 5-1/4 inch disk format was later introduced, with a capacity of 360 kilobytes.

In 1986, a 3-1/2 inch floppy disk format was introduced, first with a 720 kilobyte, and later with a 1.44 megabyte capacity. These devices had a sturdy hard plastic housing for the floppy disk in place of the thick paper envelope of the earlier 5-1/4 inch format. The 3-1/2 inch format had a slideable write-protect tab. When the disk was inserted in the drive, the metal cover was moved aside, allowing the read-write heads access to the magnetic recording surfaces. Although floppy disks have been largely superseded by later technologies, some 3-1/2 inch drives remain in use, more than 20 years after their introduction. They are very economical and allow transfer of data from older machines that do not have USB ports or CD/DVD read-write drives.
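
The capacities quoted for these formats follow from simple disk geometry. The sketch below is only an illustration: the sector size and the sides, tracks, and sectors-per-track figures are the commonly cited values for IBM PC-formatted disks, which are assumptions not stated in the text above, and the names `FORMATS`, `capacity_bytes`, and `capacity_table` are made up for this example.

```python
SECTOR_BYTES = 512  # assumed sector size for PC-formatted floppy disks

# (label, sides, tracks per side, sectors per track): assumed PC geometries
FORMATS = [
    ("5-1/4 inch single-sided", 1, 40, 8),
    ("5-1/4 inch double-sided", 2, 40, 9),
    ("3-1/2 inch double-density", 2, 80, 9),
    ("3-1/2 inch high-density", 2, 80, 18),
]


def capacity_bytes(sides, tracks, sectors, sector_bytes=SECTOR_BYTES):
    """Formatted capacity: every sector on every track on every side."""
    return sides * tracks * sectors * sector_bytes


def capacity_table():
    """Map each format label to its capacity in kilobytes (1024 bytes)."""
    return {label: capacity_bytes(s, t, n) // 1024 for label, s, t, n in FORMATS}


if __name__ == "__main__":
    for label, kilobytes in capacity_table().items():
        print(f"{label}: {kilobytes} KB")
```

Under these assumptions the four formats work out to 160, 360, 720, and 1,440 kilobytes. The last figure is the one marketed as "1.44 megabytes."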

Personal Computer Graphical User Interface

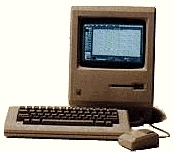

In 1984, Apple introduced the first personal computer graphical user interface in its Macintosh computer. Prior to this, the primary computer-user interface was command-driven. The Macintosh had an 8 MHz Motorola 68000 central processor. It came in a small beige case with a monochrome monitor and a 400 kilobyte 3-1/2 inch floppy disk drive, and sold for $2,495. The graphical user interface presented the user with a simulated desktop with icons for objects such as files and a wastebasket. The user could use the mouse to point and click on an object and drag it to a different location, and to cut and paste text. Windows could be opened, resized, and made to vanish, and objects could be manipulated (a file could be dropped inside a folder).

In 1985, Microsoft introduced Windows 1.0, an operating environment that ran on top of DOS and gave IBM PC’s and compatible computers a graphical user interface with some of the functions offered in the Apple Macintosh, along with the ability to run several applications concurrently. Windows 2.0, introduced in 1987, provided an enhanced graphics interface, where users could overlap windows and control screen layout. In 1990, Microsoft introduced Windows 3.0, with enhanced colors, improved icons, and program, file and print manager utilities.

Hard Drives

In 1980, Seagate Technology introduced the ST-506, a 5-1/4 inch rigid (hard) disk drive with a capacity of 5 megabytes. It had significantly faster data access times and five times the data storage capacity of contemporary floppy disk drives. A more-compact 3-1/2 inch hard drive format was first produced by Rodime in 1983. These rigid disk storage devices were not removable. They were not intended as replacements for removable floppy disk drives, but as a means of rapidly storing and retrieving data stored in the local machine.

Modern hard drives are rigid magnetic disk drives using sealed disks of aluminum alloy or glass coated with a magnetic material. The disks are spun at very high speeds (7,000-15,000 rpm) and are written to and read from by read-write heads that come very close to, but do not touch, their surface. A microprocessor-controlled actuator arm moves the head to the desired position on the disk. For personal computers, the most common format is the 3-1/2 inch form factor, equivalent in external size to a 3-1/2 inch floppy disk drive. In 2008, available hard disk drives for personal computers had capacities of 120 gigabytes (120,000,000,000 bytes) to 750 gigabytes.

Viruses, Trojans and Worms

A computer virus is a program that can replicate itself and potentially spread to other computers. The concept of a computer program that could replicate itself was first described in 1949 by John Louis von Neumann in lectures at the University of Illinois on the Theory and Organization of Complicated Automata.

In 1971, Robert Thomas, working at BBN Technologies, wrote a functional self-replicating program, the Creeper virus. Creeper used the ARPANET network to transmit copies of itself to DEC PDP-10 computers incorporating the TENEX operating system. Virus programs are typically designed to attach themselves to files that are executed by legitimately installed programs. The 1977 novel The Adolescence of P-1, by Thomas Ryan, described a fictional self-aware intelligent program, functionally a computer virus, that used telecommunication links to spread its control to various remote computers.

A Trojan is a computer program that includes a hidden malicious capability. (The name Trojan is derived from the ancient legend, recounted in Virgil’s Aeneid, of a large wooden horse left near the gates of Troy to trick the Trojans into moving concealed Greek warriors inside city walls.) The Trojan may appear to perform a useful or benign function (and may indeed do so), but characteristically executes a covert malicious operation. A Trojan may replicate itself and may gather information for transmission to an external agent. It may be propagated via a network connection or via a data storage device (a data disk or flash memory module).

A computer worm is a program that makes use of operating system or application program vulnerabilities to spread to other computers. Worms replicate by using computer networks to transmit copies of themselves. Worms may carry payloads that perform malicious functions such as installing backdoors (illicit entry points) to a computer that can be used by an external agent to remotely control the infected computer.

The Stuxnet worm, introduced in 2009, caused Iranian computers controlling uranium enrichment to direct thousands of centrifuges to self-destruct through overspeed. Stuxnet was adaptable and incorporated self-replication, remote communication, and data and program control functions. Its objective was the covert control of specific programmable controllers in Siemens control systems at Iranian nuclear weapons development facilities.

Antivirus Programs

Antivirus programs function to detect and erase or incapacitate computer viruses and other malicious programs. The antivirus program typically scans computer memory to detect viruses by comparing characteristics to known virus features, or monitors program activity to detect suspicious performance. The most common detection methodologies are signature-based threat detection and behavior-based recognition. Once the malicious program is identified, the antivirus software takes automatic action to remove or deactivate the malicious program, or informs the user of the recommended course of action.
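
Signature-based detection, in its simplest form, amounts to searching data for byte patterns known to occur in malicious programs. The following is a minimal sketch of that idea only, not a description of how any particular antivirus product works. The signature names and byte patterns are invented for illustration, and `scan_bytes` and `scan_file` are hypothetical helper names.

```python
# Hypothetical signature database: name -> byte pattern.
# These patterns are made up and do not correspond to real malware.
SIGNATURES = {
    "Example.A": b"\xde\xad\xbe\xef",
    "Example.B": b"EVIL_PAYLOAD",
}


def scan_bytes(data, signatures=SIGNATURES):
    """Return the sorted names of every signature found anywhere in data."""
    return sorted(name for name, pattern in signatures.items() if pattern in data)


def scan_file(path, signatures=SIGNATURES):
    """Read a file in binary mode and scan its full contents."""
    with open(path, "rb") as handle:
        return scan_bytes(handle.read(), signatures)


if __name__ == "__main__":
    sample = b"header" + b"\xde\xad\xbe\xef" + b"trailer"
    print(scan_bytes(sample))          # one made-up signature matches
    print(scan_bytes(b"clean data"))   # nothing matches
```

A scanner of this kind can only recognize patterns already in its database, which is one reason it is commonly paired with behavior-based monitoring.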

Most antivirus software incorporates data sets that can be revised, typically via an Internet connection to the antivirus vendor, to reflect updated information about malicious programs. The antivirus program may also be updated in this way.

Computer security can be enhanced by combining the use of antivirus programs with physical (controlled access, isolation) or electronic (passwords, filters and firewalls) barriers to external threats.

High Speed Modems

The rate at which modems can transmit data has increased over time. In the early 1990’s, 9600 bps modems became available, and 56,000 bps V.90 modems were standardized in 1998. Later technologies, such as ADSL, support transmission rates of up to 8 megabits per second (Mbps). Special modems support communications over coaxial cable.
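
To put these rates in perspective, the sketch below estimates how long it would take to send the contents of one full 1.44 megabyte floppy disk at each speed. It is an idealized calculation: it assumes the link runs at its full nominal rate with no protocol overhead or compression, and it takes the floppy's capacity to be 1,474,560 bytes. The names `transfer_seconds`, `FLOPPY_BYTES`, and `RATES` are illustrative only.

```python
FLOPPY_BYTES = 1_474_560  # assumed size of a full 1.44 MB floppy disk

# Nominal line rates in bits per second, taken from the text above
RATES = {
    "9600 bps modem": 9_600,
    "56,000 bps modem": 56_000,
    "8 Mbps ADSL": 8_000_000,
}


def transfer_seconds(size_bytes, bits_per_second):
    """Ideal transfer time: total bits divided by the line rate."""
    return size_bytes * 8 / bits_per_second


if __name__ == "__main__":
    for label, rate in RATES.items():
        seconds = transfer_seconds(FLOPPY_BYTES, rate)
        print(f"{label}: {seconds:.1f} seconds")
```

Under these assumptions the transfer takes roughly 20 minutes at 9600 bps, about three and a half minutes at 56,000 bps, and under two seconds at 8 Mbps.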

Built-in Internet Support

Released in 1995, Microsoft’s Windows 95 included a 32-bit TCP/IP (Transmission Control Protocol/Internet Protocol) capability for built-in Internet support and dial-up networking. Windows 95 had a plug and play feature that made it easier for users to install hardware and software. The 32-bit operating system provided enhanced multimedia capabilities, integrated networking, and expanded features supporting mobile computing.

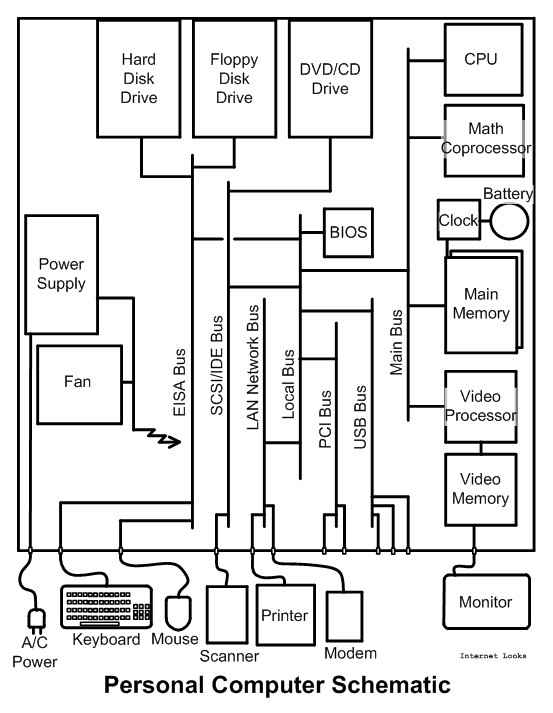

Computer Architecture

Most modern computers have architectures that support their basic function as machines that execute lists of program instructions. Personal computers have a case, an enclosure for protection and handling, with connectors to external devices. Mainframe computers have similar architectures, but are larger and have much more complex and numerous external interfaces and internal components.

A cooling system may incorporate a fan and radiators for heat dissipation, and a power supply connects to a power source and converts electrical power to a form suitable for the various computer components. Heat dissipation can pose major problems in larger mainframe computers, and may require liquid cooling. Electrical connectors distribute power to the computer’s components. In portable computers, an internal battery supplies power for computer operation. A small battery provides power for the internal clock.

The central processing unit is the principal component where computations are carried out. The central processing unit may incorporate more than one microprocessor. Some mainframe computers have thousands of microprocessors. Internal memory is of two types: read-only memory (ROM) which holds permanent data essential for the computer’s operation; and main memory, for temporary storage of data used to support the execution of computer programs.
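
The idea of a machine that executes a list of instructions against memory can be sketched in a few lines. The toy machine below is purely hypothetical: its four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) and single accumulator are invented for illustration and do not model any real processor. The program is held in an immutable tuple, loosely standing in for read-only memory, while the data lives in a list that plays the role of main memory.

```python
def run(program, memory):
    """Fetch, decode, and execute instructions until HALT is reached."""
    acc = 0  # accumulator register
    pc = 0   # program counter: index of the next instruction
    while True:
        op, arg = program[pc]  # fetch the next instruction
        pc += 1
        if op == "LOAD":       # copy a memory cell into the accumulator
            acc = memory[arg]
        elif op == "ADD":      # add a memory cell to the accumulator
            acc += memory[arg]
        elif op == "STORE":    # write the accumulator back to memory
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op}")


# Hypothetical program: memory[2] = memory[0] + memory[1]
PROGRAM = (("LOAD", 0), ("ADD", 1), ("STORE", 2), ("HALT", None))

if __name__ == "__main__":
    print(run(PROGRAM, [2, 3, 0]))
```

Starting from memory cells holding 2, 3, and 0, the program leaves the sum 5 in the third cell.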

Data drives, mounted in special bays or trays, include hard drives, CD/DVD drives, and diskette drives. An internal data bus connects the various digital components. In mainframe computers the internal data bus topology can be extremely complex. Input and output ports of appropriate formats provide access to external equipment such as printers, display units, modems, speakers, microphones, video cameras, and data storage devices.

CD Drives

Philips and Sony introduced the CD-DA (Compact Disk-Digital Audio) in 1982. This optical disk digital format stored a high-quality stereo audio signal. CD’s became a huge success in the entertainment market.

In 1985, Philips and Sony developed a technology called CD-ROM (Compact Disk-Read Only Memory) that extended the CD technology for computer usage. The CD technology stores data in about 2 billion microscopic pits arranged in a spiral track on a disk 4-3/4 inches in diameter. The data is coded in binary form and is read with 780 nm wavelength lasers. ISO standard 9660 allows CD data access through file name and directories.

CD technology was again extended in 1990 to allow computer drives to write data to CD’s. CD’s can store about 700 megabytes of data. Computer CD drives were developed that could not only play CD’s, but could read and write data and audio files to them.
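
The figure of about 700 megabytes can be checked with a short calculation. The sketch below assumes the usual data-disc layout of 75 sectors per second of playing time with 2,048 bytes of user data per sector, and playing times of 74 and 80 minutes. None of these values are given in the text above, and the function name `cd_data_bytes` is illustrative.

```python
SECTORS_PER_SECOND = 75       # assumed sector rate of a CD
DATA_BYTES_PER_SECTOR = 2048  # assumed user data per sector on a data disc


def cd_data_bytes(minutes):
    """User data capacity of a disc with the given playing time in minutes."""
    return minutes * 60 * SECTORS_PER_SECOND * DATA_BYTES_PER_SECTOR


if __name__ == "__main__":
    for minutes in (74, 80):
        size = cd_data_bytes(minutes)
        print(f"{minutes}-minute disc: {size:,} bytes ({size / 2**20:.1f} MB)")
```

Under these assumptions an 80-minute disc holds 737,280,000 bytes, or about 703 megabytes when a megabyte is counted as 1,048,576 bytes, and a 74-minute disc holds about 650 megabytes.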

DVD Drives

In 1995, a consortium of electronic equipment and media enterprises announced a standard called DVD (Digital Video Disk), developed principally by Toshiba and having the capacity to store feature-length motion pictures. DVD’s are, like CD’s, 4-3/4 inches in diameter, but use a 650 nm laser and have the capacity to store 4.7 gigabytes of data. A double-layer DVD format was later developed with nearly twice the storage capacity, 8.54 gigabytes. DVD’s began to be marketed in the United States in 1997.

Computer DVD drives support audio, video and data formats that are compatible with devices such as DVD players. Current DVD read-write (RW) computer drives have the capacity to play, read and write to CD’s as well as DVD’s.

Solid State Drives

Working at Toshiba, in 1984 Dr. Fujio Masuoka devised a solid state data storage device using quantum tunneling effects and logic circuits. These flash memory devices offered both long-term data storage and the capacity to be rewritten. That is, data recorded on the solid state memory was retained indefinitely after the device was powered off. When powered back on, the memory could be read any number of times. The flash memory could be erased and new data could be stored in it.

Flash memory offered very good reliability and combined the permanence of read-only memory with the rewritability of random-access memory, but its memory erasure method made it slower than other data storage devices. Its use was originally relegated to special purpose military applications.

In 1998, Sony released an improved form of flash memory in the form of the Memory Stick. This removable device had the capacity to store and retrieve 128 megabytes of data. It was compatible with microprocessor chips in consumer devices. Special software facilitates the interface between the computer and the external flash memory device. The capacity and performance of flash memory devices rapidly increased, and their utilization in personal computers also grew swiftly.

In 2000, Matsushita, SanDisk, and Toshiba developed the Secure Digital (SD) solid state memory card format (1.25 x 0.85 x 0.083 inches) for use in portable devices. Originally, SD cards were available in 32 and 64 megabyte capacities. These and similar portable solid state memory devices have the advantage of providing a convenient means of transferring information (text documents, digitized images, numerical data, etc.) between devices such as photographic cameras, computers, and video recorders/players.

A variety of adapters facilitate data transfer using various portable solid state memory formats in common use. In 2008, SD format memory cards were available with capacities in the 256 megabyte to 32 gigabyte range.

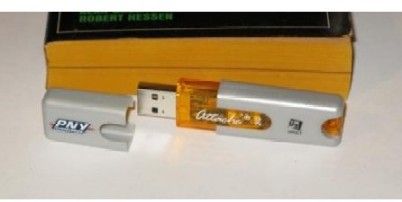

Solid state memory devices such as SD cards interface with computers via adapters which connect to access ports such as Universal Serial Bus (USB) connectors. Portable USB flash drives incorporate a USB connector and a small printed circuit board. Including their protective casing, USB flash drives are typically 2-3/4 x 3/4 x 1/4 inches or smaller in size. They derive the power necessary for their operation from the computer’s USB port. Solid state drives are also available for computer installation in internal bays. Although they have no moving parts, the various forms of solid state drives function like hard disk drives.

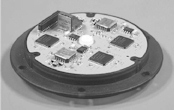

Microelectromechanical Systems

In 1959, Richard Feynman gave a lecture at the California Institute of Technology (“There’s Plenty of Room at the Bottom”) pointing out potential benefits of micromechanics. Thirty years later, the use of embedded computers in conjunction with sensors and effectors was further developed to use miniaturized elements built employing newly-developed microfabrication techniques such as silicon micromachining. Microelectromechanical Systems (MEMS) typically have physical dimensions in the millimeter or sub-millimeter range.

One key aspect of MEMS is the integration of electronic and mechanical functions in a single miniaturized device. MEMS use includes automated sensors, effectors, and mechanisms such as fluidic and optical contrivances. The miniature computers integrated in MEMS devices perform control, regulation, or monitoring tasks for the mechanical components.

The more mundane applications of MEMS include devices that sense accelerations indicative of a potentially disastrous crash and automatically deploy an automobile air bag, and those that determine the direction of the gravitational force to drive the right-side-up orientation of the screen display on cell phones. More cutting-edge applications include apparatus such as self-guided target-tracking small munitions, and handheld medical implements able to diagnose injury and disease and recommend optimal treatment protocols.

Special Military Applications

The military pioneered the use of computers and remains at the forefront of many computer technologies. The fastest computers, the IBM Roadrunner at the Los Alamos National Laboratory and the Cray Jaguar at the Oak Ridge National Laboratory, are used for nuclear research and are able to perform more than one quadrillion (one thousand million million) floating point operations per second. These are massive custom-built computers. The IBM Roadrunner occupies 6,000 square feet of floor space, incorporates 19,440 processors, and has 113.9 terabytes (113.9 trillion bytes) of memory. Its operation requires 2,350 kilowatts of power.

For administrative purposes, military use is in most ways no different than civilian use. One difference is an increased emphasis on security. When necessary, security is enhanced by firewalls, passwords, isolation, physical control of access, and electromagnetic shielding. Notebook computers for battlefield use are specially built to use military communication and connection methods and tolerate severe weather and harsh environments.

Special military functions require computers with one or more of the following characteristics: extreme miniaturization, gamma, x-ray and neutron radiation tolerance, extreme reliability, multiple failure tolerance, very high computation speeds, massive data handling capacity, shock tolerance, and tolerance of harsh environmental conditions, including operation in sandstorms, in a vacuum, or underwater.

Work on special applications drives research into diverse disciplines and technologies. There is continued miniaturization of silicon-based microcircuits, as well as development of diamond-based and optical circuit technologies. Miniaturization makes it possible to construct semi-autonomous flying machines about the size of a dragonfly for surveillance and other purposes. Applications such as cryptography drive research into quantum-based computers. Microcircuits using artificial diamonds have advantages of strength, radiation tolerance and superior heat conductivity over silicon-based microcircuits. Integrated optical devices offer the inherent simplicity of all-optical systems, high switching speeds, and tolerance of electromagnetic disturbances. Quantum computers offer advantages in speed for the solution of a class of computationally intensive problems involving probabilities, the factoring of large numbers, or very large matrices. Intelligent computer programs are developed to be covertly inserted in antagonist computer systems to disrupt operations or secretly obtain information.

If these or other new technologies are successfully deployed by the military, they will eventually be broadly adopted or adapted for civilian use. For example, the basic technology developed to disrupt computer operations can be adapted for use in detection of, and recovery from, computer faults.

|